Introduction to Responsible AI


Google Cloud Tech

In this video, learn about the importance of responsible AI, and how Google implements responsible A...

Video transcript:

Hello, and welcome to Introduction to Responsible AI. This course will help you understand why Google has put AI principles in place, identify the need for a responsible AI practice within an organization, recognize that decisions made at all stages of a project have an impact on responsible AI, and recognize that organizations can design AI to fit their own business needs and values. Many of us already have daily interactions with artificial intelligence, or AI, from predictions for traffic and weather to recommendations for TV shows you might like to watch next. As AI becomes more common, many technologies

that aren't AI-enabled may start to seem inadequate. Now, AI systems are enabling computers to see, understand, and interact with the world in ways that were unimaginable just a decade ago, and these systems are developing at an extraordinary pace. Yet despite these remarkable advancements, AI is not infallible. Developing responsible AI requires an understanding of the possible issues, limitations, or unintended consequences. Technology is a reflection of what exists in society, so without good practices, AI may replicate existing issues or bias and amplify them. But there isn't a universal definition of responsible AI, nor is there a

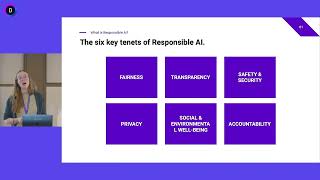

simple checklist or formula that defines how responsible AI practices should be implemented. Instead, organizations are developing their own AI principles that reflect their mission and values. While these principles are unique to every organization, if you look for common themes, you find a consistent set of ideas across transparency, fairness, accountability, and privacy. At Google, our approach to responsible AI is rooted in a commitment to strive towards AI that's built for everyone, that's accountable and safe, that respects privacy, and that is driven by scientific excellence. We've developed our own AI principles, practices, governance processes, and tools that

together embody our values and guide our approach to responsible AI. We've incorporated responsibility by design into our products and, even more importantly, our organization. Like many companies, we use our AI principles as a framework to guide responsible decision-making. We all have a role to play in how responsible AI is applied. Whatever stage in the AI process you're involved with, from design to deployment or application, the decisions you make have an impact. Therefore, it's important that you too have a defined and repeatable process for using AI responsibly. There's a common misconception with artificial intelligence that

machines play the central decision-making role. In reality, it's people who design and build these machines and decide how they're used. People are involved in each aspect of AI development: they collect or create the data that the model is trained on, and they control the deployment of the AI and how it's applied in a given context. Essentially, human decisions are threaded throughout our technology products, and every time a person makes a decision, they're actually making a choice based on their own values. Whether it's the decision to use generative AI to solve a problem as opposed to other

methods, or anywhere throughout the machine learning lifecycle, that person introduces their own set of values. This means that every decision point requires consideration and evaluation to ensure that choices have been made responsibly, from concept through deployment and maintenance. Because there's potential to impact many areas of society, not to mention people's daily lives, it's important to develop these technologies with ethics in mind. Responsible AI doesn't mean focusing only on the obviously controversial use cases. Without responsible AI practices, even seemingly innocuous AI use cases, or those with good intent, could still cause ethical issues or unintended

outcomes, or not be as beneficial as they could be. Ethics and responsibility are important, not least because they represent the right thing to do, but also because they can guide AI design to be more beneficial for people's lives. At Google, we've learned that building responsibility into any AI deployment makes better models and builds trust with our customers and our customers' customers. If at any point that trust is broken, we run the risk of AI deployments being stalled, unsuccessful, or, at worst, harmful to the stakeholders those products affect. This all fits into our belief at Google that

responsible AI equals successful AI. We make our product and business decisions around AI through a series of assessments and reviews. These instill rigor and consistency in our approach across product areas and geographies. These assessments and reviews begin with ensuring that any project aligns with our AI principles. While AI principles help ground a group in shared commitments, not everyone will agree with every decision made about how products should be designed responsibly. This is why it's important to develop robust processes that people can trust, so even if they don't agree with the end decision, they trust the

process that drove the decision. In June 2018, we announced seven AI principles to guide our work. These are concrete standards that actively govern our research and product development and affect our business decisions. Here's an overview of each one. One: AI should be socially beneficial. Any project should take into account a broad range of social and economic factors, and will proceed only where we believe that the overall likely benefits substantially exceed the foreseeable risks and downsides. Two: AI should avoid creating or reinforcing unfair bias. We seek to avoid unjust effects on people, particularly those related to sensitive

characteristics such as race, ethnicity, gender, nationality, income, sexual orientation, ability, and political or religious belief. Three: AI should be built and tested for safety. We will continue to develop and apply strong safety and security practices to avoid unintended results that create risks of harm. Four: AI should be accountable to people. We will design AI systems that provide appropriate opportunities for feedback, relevant explanations, and appeal. Five: AI should incorporate privacy design principles. We will give opportunity for notice and consent, encourage architectures with privacy safeguards, and provide appropriate transparency and control over the use of data. Six:

AI should uphold high standards of scientific excellence. We will work with a range of stakeholders to promote thoughtful leadership in this area, drawing on scientifically rigorous and multidisciplinary approaches, and we will responsibly share our knowledge by publishing educational materials, best practices, and research that enable more people to develop useful AI applications. Seven: AI should be made available for uses that accord with these principles. Many technologies have multiple uses, so we will work to limit potentially harmful or abusive applications. In addition to these seven principles, there are certain AI applications that we will not pursue. We will

not design or deploy AI in these four application areas: technologies that cause or are likely to cause overall harm; weapons or other technologies whose principal purpose or implementation is to cause or directly facilitate injury to people; technologies that gather or use information for surveillance that violates internationally accepted norms; and technologies whose purpose contravenes widely accepted principles of international law and human rights. Establishing principles was a starting point rather than an end. What remains true is that our AI principles rarely give us direct answers to our questions on how to build our products. They don't and

shouldn't allow us to sidestep hard conversations. They are a foundation that establishes what we stand for, what we build, and why we build it, and they are core to the success of our enterprise AI offerings.
